It’s difficult to know where to begin or what to say that won’t sound insignificant or inconsequential in the current business environment. I’d be fairly certain that even the most expert risk assessment professionals, combined with the best business continuity brains in the world, would have struggled to imagine the global chaos which seems to have engulfed us all. No matter how well thought out the contingency plans are, if many businesses are closed, and many, many people are not working, then it’s difficult to see how many organisations can continue to trade effectively.

Of course the banks (yes, those banks which brought the world to its knees in 2008) smell an opportunity to ‘help out’, although many do not appear to remember the debt of gratitude they owe the millions and millions of taxpayers who bailed them out a decade or so ago when it comes to the terms on which they are prepared to hand out money now. And anyone who does most of their business in the autumn and beyond may well be rubbing their hands with glee, even if cash flow is a struggle right now. And the food sector must think it’s Christmas come early, along with the makers of toilet rolls and hand sanitisers!

But for the vast majority of individuals and businesses, there’s a nasty, deep precipice on which we’re all uncomfortably balanced, much like the final scene of The Italian Job.

If there is any kind of silver lining to be found in these dark days, then let’s hope that the realisation that:

a) It is actually possible for many of us to work from home will lead to a massive change in work culture, giving employees more flexibility and giving the environment a break as far less work-related travel takes place;

b) We can all exist without having to ‘consume’ 24x7x365 will lead to a slightly less selfish society, one where community and people matter rather more than possessions.

Of course, I could take the alternative view that, forced to stay at home, we’re becoming even more used to absolutely everything being delivered to our door, so there’s no need to go out anymore: we can live, work and enjoy our leisure without engaging with the outside world, except to ‘fight’ for vital supplies.

Usually, I’m a glass half-empty kind of person, but on this one I’m optimistic that, no matter how bad it gets for individuals, businesses and whole countries, there might just be a recognition as to what truly matters in life.

One thing is for sure: recovery from the pandemic of 2020 will take rather longer than picking up the pieces after the financial crash of 2008.

Please note: Pretty much all of the articles in this issue were written ‘pre-coronavirus’, so bear that in mind when it comes to their content.

The choice of Healthcare as a focus for some articles in this issue was made back in the mists of 2019 – so, we were planning to cover this industry in any case – we’re not jumping on the bandwagon!

New research from IDC and Micro Focus forecasts that global business is approaching a digital transformation tipping point.

By 2023:

While DX has at times been seen as something of a buzzword for IT, it is now regarded by most organisations as an essential business mandate and will soon become the default operating mode. With the data being created globally growing at a CAGR of 25.8%, businesses pursuing DX are developing systems to manage and exploit the opportunity: modern systems of record to ensure that data is accessible and AI/ML to efficiently utilise it.
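To put the 25.8% CAGR quoted above into perspective, the compounding arithmetic can be sketched in a few lines of Python. This is an illustrative calculation only: the function names are hypothetical, and it assumes the rate holds constant every year, which the research does not claim.

```python
import math


def growth_factor(cagr: float, years: float) -> float:
    """Multiple by which a quantity grows after `years` at a constant CAGR."""
    return (1.0 + cagr) ** years


def doubling_time(cagr: float) -> float:
    """Years needed to double at a constant CAGR (assumes cagr > 0)."""
    return math.log(2.0) / math.log(1.0 + cagr)


if __name__ == "__main__":
    cagr = 0.258  # the 25.8% figure quoted in the article
    for years in (1, 3, 5):
        print(f"after {years} year(s): x{growth_factor(cagr, years):.2f}")
    print(f"doubling time: {doubling_time(cagr):.2f} years")
```

On that assumption, global data volumes would roughly double about every three years.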

The new competitive advantage identified by this research is found in turning data into intelligence through a digital transformation platform which underpins all business systems. This stands in contrast to existing DX approaches which address specific functionalities. By 2025, it is expected that 80% of organisations will measure customer outcomes in terms of their interaction with a whole ecosystem, taking the strength of information flow through the network into account.

The key differentiator for businesses in this landscape, according to Joe Garber, Global Head of Strategy & Solutions at Micro Focus, will be ‘digital determination’: the capacity and dedication to see through end-to-end DX projects. “Although core business systems are the lifeblood of the organisation, only five percent of the organisations we studied have built enduring strategies of DX success. Rather than adopting a rip-and-replace strategy that can yield unacceptable risk, we are seeing that most businesses need to instead pursue an assertive modernisation strategy.”

While DX is rapidly becoming the new norm, only 5.1% of the organisations studied had already realised sustainable performance excellence competencies around new digital technologies and business models. “Businesses cannot afford the risky and time-consuming proposition of digitally transforming from scratch,” continued Garber. “They need to run and transform at the same time. We expect that this will not be a transition stage, but the way that IT will continue to operate - and getting to that point demands determination.”

According to a survey from global research and thought leadership organisation Leading Edge Forum (LEF) and DXC Technology, companies have overdue homework to complete if digital transformation is to succeed.

The study, “Connecting Digital Islands: Bridging the Business Transformation Gap,” explores where global businesses are in their transformations, and what benefits, challenges and opportunities the next decade may bring. While 79% of respondents say that they’re leveraging technology to become market leaders, two-thirds (66%) say their mission-critical systems are so complex they are wary of changing them. A further 62% responded that they lack a common set of tools and platforms across the organization, resulting in a collection of “digital islands”: units working with the right technologies but independently of each other.

The survey also found that over half of the respondents (52%) believe staff are not sufficiently using analytics to make decisions based on data insights, despite 79% claiming they are effectively using technology to grow, compete, and drive market leadership. This means insights are being missed, given 77% say the collection and use of data is built into how they compete and operate.

While enterprises have been focused on data- and technology-driven transformation for some time, survey respondents say they must get the “people and culture” aspects right for effective, long-term change in the 2020s. Significantly, 65% of the business leaders surveyed reported that employee reluctance to change work habits is a barrier to technology-enabled organizational change, while only 14% rank improving employee engagement and empowerment as their top internal priority.

More notably, 70% say more effective leadership is needed across the organization.

In the survey respondents also prioritised the emerging technologies they believe will most positively impact their organisations:

● High-speed 5G networks (60%);

● Artificial intelligence and machine learning (59%);

● Sensors and the Internet of Things (55%);

● Robots and robotic process automation (54%);

● Virtual and augmented reality (53%);

● Voice interfaces such as Alexa, Siri and Google Assistant (52%).

Commenting on the research findings, Richard Davies, VP, Strategic Advisory, DXC Technology and MD at LEF, said: “This is a strong reminder that getting the right combination of people, culture and technology is essential for making effective, long-term change. Employees will also need to embrace technology-infused work cultures more strongly, and leaders must have the same priority. To close the gap, companies have a lot of work to do: work that should have happened some time ago. This ranges from building effective leadership and internetworked teams, to modernizing IT and moving up the stack for data-driven insights, to establishing an ecosystem of partners and suppliers and instilling a culture of collaboration, learning and agility.”

The full report concludes by offering enterprises a five-step plan to help create the conditions for change if they want the 2020s to be the decade in which the cultural, technological and market barriers finally come down:

Organisations across Europe are facing a major skills challenge caused by digital transformation, with many struggling to keep pace with learning and development (L&D) needs, according to research from Skillsoft*. Carried out in the UK, France and Germany, the research found that reskilling in the face of changing and increasingly digital working environments is the biggest single issue for L&D professionals across all three countries (42 percent of respondents on average).

However, only 22 percent of respondents from across the three countries said their organisations are fully prepared to provide the new skills required by digital transformation. The UK sits below this average, with just 14 percent of organisations saying they have fully prepared employees with new skills, with France only slightly higher than the average, reporting 33 percent of organisations are fully prepared. France had the most respondents say they are doing nothing to build digital transformation skills (20 percent), compared to Germany at three percent and the UK at one percent. In each country, most respondents believe their organisations need to do more to keep pace with digital transformation.

Despite the overwhelming response that organisations are not preparing employees for digital transformation, only half of the organisations in each country have increased investment in skills to keep pace with digital transformation (UK – 56 percent, France – 54 percent, Germany – 56 percent).

“It’s clear that across these three major territories, digital transformation is severely testing the planning, implementation and spending strategies for L&D professionals. Despite a clear trend of increasing and targeted investment, the industry faces a challenge to keep pace with the changes that digital transformation is bringing,” explained Steve Wainwright, Managing Director EMEA at Skillsoft. “It’s vital, therefore, that we also look to technology to help build successful L&D strategies so we can all reap the benefits of this exciting era of disruptive change in the way we learn, widen our skillsets and give employees the greatest opportunity to develop,” concluded Wainwright.

Additional Research Highlights

Customer experience suffers; business opportunities missed as IT teams firefight unplanned work, finds PagerDuty research.

PagerDuty has released new research suggesting that EMEA IT professionals are stressed by an increase in time-critical, unplanned work, which amounts to more than 100 hours per person per year for 75% of respondents. The increase is impacting their ability to deliver on business priorities and assure a positive experience for customers.

In the EMEA State of Unplanned Work Report 2020, 53% of respondents in EMEA said unplanned work caused stress and anxiety for them personally (versus 61% in Asia Pacific (APAC) and a massive 80% in North America (NA)).

Disruptive, unplanned work redirects IT resources away from key responsibilities and into fire-fighting mode, making it harder for teams to deliver on business priorities and take advantage of opportunities to innovate, according to 81% of EMEA respondents (compared to 81% in APAC and 86% in NA). Adding to the pressure, 73% of EMEA respondents said they typically find out about major issues from dissatisfied customers rather than their own systems (70% in APAC and just 47% in NA) so are on the back foot before they even start resolution.

Steve Barrett, Vice President, EMEA at PagerDuty, commented, “In an always-on world, customers expect companies to deliver a perfect digital experience every time. Anything less can severely damage the bottom line. Yet in increasingly complex IT environments, it can be difficult for responders to cut through the noise and get to the issues that matter, fast. Machine learning and automation can help bring together the right people with the right information in real-time, enabling resolution in seconds or minutes rather than hours.”

Unfortunately, for many companies in EMEA, opportunities to mitigate the impacts of unplanned work through automation are being missed. 81% of EMEA respondents said their organisations have little or no automation for IT issue resolution (77% in APAC and 92% in NA).

29% of EMEA respondents said they had considered leaving their job as a result of unplanned work, creating recruitment and retention challenges for employers (31% in APAC and 38% in NA).

Barrett continued, “Companies can help improve the satisfaction and wellbeing of IT professionals by altering how, when and why individual responders are notified of IT incidents and benchmarking IT team health to ensure the effectiveness of their wellbeing strategies.”

Other EMEA findings include:

EMEA incident recovery plans are least likely to be fit for purpose

70% of respondents in EMEA said they had experienced issues that their response plans failed to account for (versus 68% in APAC and just 53% in NA).

EMEA is the worst communicator when it comes to major IT incidents

While most companies in EMEA are quick to engage IT responders in the event of a major incident, many fail to keep key stakeholders informed:

• Only 50% notify all those on relevant teams (54% in APAC and 69% in NA)

• Only 31% notify customers (43% in APAC and 47% in NA)

• Only 36% notify employees (40% in APAC and 53% in NA)

EMEA teams dismiss postmortems

EMEA is also the least likely region to maintain a constructive dialogue after the event. Only 40% of respondents in EMEA said they use incident postmortems to continuously hone their incident response (compared with 47% in APAC and 67% in NA).

52% of remote workers say they’re less likely to travel and view themselves as more productive (52%) and efficient (48%) when working remotely.

GitLab, the single application for the DevOps lifecycle and the world’s largest all-remote company, has published findings from its inaugural Remote Work Report, which surveyed 3,000 professionals from the United States, Canada, United Kingdom and Australia who work remotely or have the option to work remotely. The survey highlights the ever-increasing value employees and employers place on remote work as an alternative to traditional, in-office practices. In an era with increasing recognition and understanding that mental health and physical health directly impact employee performance, it’s undeniable that the future of work will be remote.

"We believe all-remote is the future of work, as it delivers extraordinary benefits to businesses and employees,” said Sid Sijbrandij, CEO and co-founder of GitLab. “For companies, there are unique operational efficiencies, huge cost savings on office space and a broader pool of job applicants. For employees, this structure enables off-peak lifestyles, family-friendly flexible schedules, and improved work/life harmony. We believe that a world with more all-remote companies will be a more prosperous one, with opportunity more equally distributed."

“The reality is that almost every company is already remote, whether you're working across floors or across continents,” said Darren Murph, Head of Remote at GitLab. “GitLab believes all-remote is the purest form of remote, and we're working to empower other companies to implement great remote practices. This year's report breaks down preconceived notions about remote and sheds light on its power to plant opportunities in underserved regions, make communities less transitory, and create authentically diverse teams.”

Contrary to popular belief, remote employees aren’t all traveling nomads. In fact, 52% of survey respondents reported they traveled less as remote employees. The 38% who view a lack of commute as a top benefit instead spend the time earned back from commuting with family (43%), working (35%), resting (36%), and exercising (34%). Additionally, employees find themselves overall to be more productive (52%) and efficient (48%) when working remotely.

Fourteen percent of remote workers surveyed reported they have a disability or chronic illness. Eighty-three percent of those cite remote work as a key factor in their ability to contribute to the workforce. Remote work also empowers all employees to move their organization forward. Fifty-six percent of the respondents said they feel everyone in their company can contribute to process, values, and company direction, and 50% say they default to shared documents and rely on meetings only as a last resort. Remote work levels the playing field by fostering a better sense of work-life balance and creates opportunities for everyone to contribute in the workplace.

Remote work enables employees to focus on their families without having to sacrifice their careers. Thirty-four percent of those surveyed say the ability to care for family is a top benefit of remote work, while 52% cite schedule flexibility and 38% say the lack of commute is a major benefit. Additionally, 43% reported that the absence of a commute freed up time to spend quality time with their families. Fifty-five percent of respondents have children under 18.

All-remote is the purest form of remote work, with each team member on a level playing field. Forty-three percent of remote workers surveyed feel that it is important to work for a company where all employees are remote. Currently, more than 1 in 4 respondents belong to an all-remote organization, with no offices, embracing asynchronous workflows as each employee works in their own native time zone. An added 12% work all-remote with each employee synched to a company-mandated time zone.

The benefits of remote work are only going to increase, and survey respondents agree. Eighty-six percent believe remote work is the future, but it’s also the present, as evidenced by 84% who say they are already able to accomplish all of their tasks remotely. As technology continues to improve how we communicate and how businesses operate across the globe, the need for brick-and-mortar offices and consistent, on-site attendance will continue to decrease. Remote work is here to stay, and use cases for it will only multiply.

According to new research from Globalization Partners Inc., more than 90 percent of employees who work for a global organisation describe their companies as diverse. However, a lack of understanding by the organisations themselves around how to manage this growing disparate and diverse workforce means that three out of ten respondents don’t feel a sense of inclusion or belonging. This negatively impacts employee engagement, trust, happiness, as well as staff turnover.

Globalization Partners’ 2020 global employee survey, Examining the Impact of Diversity on Distributed Global Teams, asked 1,725 randomly selected employees about their experience as distributed global team members. The research also uncovered that global teams are struggling to make communications work for them, with 46 percent of employees still relying most frequently on email, but only 31 percent finding it effective.

Other key findings include:

● Two thirds of companies are finding it challenging to align with and be sensitive to local culture and communication styles, especially when a company spans multiple geographies.

● Organisations that embrace multilingualism are seeing better team results across the board.

● Nearly nine out of ten employees (89 percent) say their company would benefit from the assistance of outside experts to help with cultural training and cross-organisation education.

“Gartner predicts that by the end of 2022, 75 percent of organisations with frontline decision-making teams in distributed geographies and with diverse mindsets will exceed their financial targets. As a company with offices in every corner of the globe, I’ve seen the incredible business advantage in hiring and building diverse teams,” said Nicole Sahin, CEO of Globalization Partners. “I also know that now more than ever, in these trying times it is critical to have the right communication tools in place to ensure success. The employee experience matters now more than ever, and collaboration tools like Slack can make a positive impact on the ability for teams to collaborate on projects and provide a social connection for employees around the world.”

Nearly half (45 percent) say their cloud optimization is still at the ‘initial’ or ‘opportunistic’ stage.

Rising technology debt, low levels of cloud maturity and lack of in-house skills are stifling global competitiveness, innovation and speed to market, according to Avanade. A new global study has found organizations could earn an extra $1B per year in revenue and reduce operational costs by more than 11 percent through adopting a holistic approach to cloud technology, apps and modern engineering techniques – what Avanade calls being ‘ready by design.’

Avanade’s survey of more than 1,600 C-level executives revealed that just 12 percent of respondents describe their cloud capability as optimized and 45 percent say they’re still at the ‘initial’ or ‘opportunistic’ stage of their journey. Only 27 percent believe that their cloud strategy will be fully optimized by 2023. Technology debt, the costs and challenges of maintaining and integrating legacy technology, was also predicted to increase over the same period, from 17 percent to 19 percent. Around three quarters of business leaders say this drain on budgets and associated security concerns over legacy platforms is impacting speed to market (76 percent), innovation (74 percent) and their ability to retain technical professionals (74 percent).

“We know that digital disruption is pervasively impacting all industries, and it poses a very real threat, even to well established businesses,” says Adam Wengert, global applications and infrastructure lead, Avanade. “Cloud maturity is creating a two-tier business landscape with digital laggards finding themselves stagnating while their digital-native competitors are steaming ahead. The point of differentiation comes from the natives’ ability to pivot towards opportunity and away from threats within days, not months and years, thanks to their cloud-based architecture.”

One of the main causes of the debt, according to the research, is that only one fifth of applications have been rebuilt for the cloud. However, with 88 percent of respondents agreeing that innovative applications have a direct impact on growth and 94 percent identifying increasing sales and revenue as a priority over the next 12 months, it’s not surprising to see a consensus from the study around the value of cloud-based custom-built applications. Nearly 9 in 10 (89 percent) executives acknowledged that human-centred apps, those that place human needs as a higher priority, are vital for growing or defending an existing market position.

“For organizations to be successful they need to be able to move quickly to deliver customer value that can increase sales and revenue. Mobile applications are just one example of where digital natives are using human-centred apps to disrupt established markets. Mobile apps present a powerful channel to bring businesses closer to their customers, providing a more personalized service and facilitating their journeys. That is just the beginning though. Successful businesses will be those that are not afraid to experiment, that truly drive enterprise innovation and get new ideas into market faster, and more often than their competitors,” explains Wengert.

However, one obstacle that hinders an organization’s ability to seize an opportunity and experiment is the need to liberate talent to use proven modern software product engineering approaches. Around half (52 percent) of respondents cited people and skills issues as the biggest obstacle to adopting modern software product engineering practices in-house.

“Agile, DevOps and other modern approaches will have a significant impact on improving reaction times and speed to market, making an organization more product and customer centric. However, with the lack of inhouse skills, enterprises can find themselves further paralysed, despite recognizing the need to be ‘ready by design.’ To accelerate digital maturity, enterprises need to take a holistic approach – keeping apps, cloud and people at the forefront of their business strategy; otherwise the outlook for cloud maturity and acceleration – and indeed enterprises themselves – is not going to improve,” concludes Wengert.

A survey of 400 data professionals across the US and Europe by Dataiku has revealed significant challenges in building trust in enterprise AI projects and perceptions across roles within the organisation.

Only 52 per cent of respondents said that their organisations have processes in place to ensure data projects are built using quality, trusted data. With topics like trust, explainability, responsibility, and ethics at the forefront of discussions in AI uptake, Dataiku asked respondents about how their organisations are managing these challenges.

When asked whether their organisation had processes in place to ensure data science, machine learning and AI are leveraged responsibly and ethically, fifty-seven per cent said no, or don’t know, despite thirty-five per cent saying they were working on it.

The perception of the impact of AI on people’s roles seems to differ greatly from CEO to non-management, suggesting AI projects struggle with inclusivity within organisations. Managers and C-suite executives were significantly more likely to respond that AI would “completely” (i.e., a 5 on the scale) change their company than non-managers.

On the other hand, despite the fact that non-managers in non-technical roles (business professionals in marketing, risk, operations, etc.) should see - or at least see the potential for - AI impact in their jobs, in practice, only 11 percent of non-managers in non-technical roles responded that they thought AI would “completely” change their role - a much lower percentage than the other more senior roles.

“Trust in AI projects will continue to present significant challenges if we are still tackling fundamental issues such as data quality, as well as more complex problems associated with ethics,” said Florian Douetteau, CEO at Dataiku. “Building internal trust will provide the foundation for external trust; this starts with trust in the data itself that is being used in AI systems. Data quality is one of the most basic but most important hurdles to overcome in the path to building sustainable AI that will bring business value, not risk.”

Inclusive AI encompasses the idea that the more people are involved in AI processes, the better the outcome (both internally and externally) because of a diversification of skills, points of view, or use cases. Practically within a business, it means not restricting the use of data or AI systems to specific teams or roles, but rather equipping and empowering everyone at the company to make day-to-day decisions, as well as larger process changes, with data at the core. The model today for traditional businesses leveraging AI seems to lean more toward data democratisation, or inclusive AI, for its larger potential to scale.

“It goes without saying that AI will impact individual roles, enterprises and industries, yet there are clearly some questions around trust, responsibility and inclusivity which need addressing before AI can have the optimal result,” added Douetteau.

AI Adoption in the Enterprise 2020 report finds growth in mature AI adoption; weak investment in data governance.

O’Reilly has published the results of its 2020 artificial intelligence (AI) survey, “AI Adoption in the Enterprise 2020.” The benchmark report uncovers trends in the evaluation, implementation, and outcomes of AI enterprise adoption over the past year.

Findings reveal that more than half of respondents are in the “mature” phase of AI adoption – defined by those currently using AI for analysis or in production – while about one third are evaluating AI, and 15% report not doing anything with AI. These numbers demonstrate growth when compared with O’Reilly’s 2019 AI Adoption in the Enterprise report, which found just 27% of organisations in the “mature” adoption phase and 54% in the evaluation phase.

When it comes to data governance, more than 26% of respondents say their organisations plan to institute formal data governance processes and/or tools by 2021 and nearly 35% expect this to happen in the next three years. Currently, just one-fifth of respondent organisations report having formal data governance processes and/or tools to support and complement their AI projects, similar to findings uncovered in the O’Reilly Data Quality Survey.

Difficulties in hiring and retaining people with AI skills were once again noted as a top barrier to AI adoption in the enterprise, down slightly from 18% in 2019. As in 2019, the biggest bottleneck to AI adoption was reported to be a lack of institutional support (22%), followed by “Difficulties in identifying appropriate business use cases” at 20%.

“AI practices are maturing, and adopters are experimenting with sophisticated AI techniques and tools, which bodes well for the future advancement of AI in the enterprise,” said Rachel Roumeliotis, O’Reilly Strata Data & AI conference co-chair and strategic content director at O’Reilly. “However, organisations will continue to struggle to expand and scale their AI practices if they don’t address the importance of data governance and data conditioning in ML and AI development.”

Other notable findings include:

Latest research from Neustar reveals across-the-board growth in attacks of all sizes.

Neustar says that its Security Operations Center (SOC) saw a 168% increase in distributed denial-of-service (DDoS) attacks in Q4 2019, compared with Q4 2018, and a 180% increase overall in 2019 vs. 2018. According to Neustar’s latest cyber threats and trends report, released today, the company saw DDoS attacks across all size categories increase in 2019, with attacks sized 5 Gbps and below seeing the largest growth. These small-scale attacks made up more than three quarters of all attacks the company mitigated on behalf of its customers in 2019.

DDoS attacks taking varied forms

In 2019, the largest threat Neustar mitigated, at 587 gigabits per second (Gbps), was 31% larger than the largest attack of 2018, while the maximum attack intensity observed in 2019, 343 million packets per second (Mpps), was 252% higher than that of the most intense attack seen in 2018. However, despite these higher peaks, the average attack size (12 Gbps) and intensity (3 Mpps) remained consistent year over year. The longest single, uninterrupted attack experienced in 2019 lasted three days, 13 hours and eight minutes.
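The year-over-year percentages above imply approximate 2018 peak values that are not stated directly. The sketch below back-calculates them, assuming “X% larger” and “X% higher” are measured relative to the 2018 value; the helper names are hypothetical, and the derived figures are estimates rather than numbers reported by Neustar.

```python
def implied_baseline(current: float, pct_increase: float) -> float:
    """Earlier value implied by `current` being `pct_increase` percent higher."""
    return current / (1.0 + pct_increase / 100.0)


def duration_minutes(days: int, hours: int, minutes: int) -> int:
    """Convert a days/hours/minutes duration to total minutes."""
    return (days * 24 + hours) * 60 + minutes


if __name__ == "__main__":
    # 587 Gbps is described as 31% larger than the largest 2018 attack.
    print(f"implied 2018 largest attack: {implied_baseline(587, 31):.0f} Gbps")
    # 343 Mpps is described as 252% higher than the most intense 2018 attack.
    print(f"implied 2018 peak intensity: {implied_baseline(343, 252):.0f} Mpps")
    # Longest attack: three days, 13 hours and eight minutes.
    print(f"longest attack: {duration_minutes(3, 13, 8)} minutes")
```

Under that reading, the 2018 peaks would have been roughly 448 Gbps and 97 Mpps, and the longest 2019 attack ran for 5,108 minutes.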

Though the number of attacks increased significantly across all size categories, small-scale attacks (5 Gbps and below) again saw the largest growth in 2019, continuing the trend from the previous year. The combination of DDoS-for-hire and botnet rental services has made DDoS attacks much easier to execute, but the fact that perpetrators seem to be in many cases choosing to engage in small-scale attacks suggests that their goal may often be something other than taking a site completely offline.

“Large, headline-making DDoS attacks do still take place, but many cybersecurity professionals believe that smaller attacks are being used simply to degrade site performance or as a smokescreen for other forms of cybercrime, such as data theft or network infiltration, which the perpetrator can execute more easily while the target’s security team is busy fighting a DDoS attack,” said Rodney Joffe, senior vice president, senior technologist and fellow at Neustar. “Furthermore, with the current move of the bulk of the workforce globally to a work from home model, we expect to see a significant increase in DDoS attacks against VPN infrastructure. This risk makes an ‘always on’ DDoS mitigation service even more critical.”

In addition to conventional DDoS attacks, which seek to exhaust bandwidth, in 2019 Neustar also observed an increase in network protocol or state exhaustion attacks, which target network infrastructure directly. Volumetric attacks continued to proliferate as well, with attackers using new DDoS vectors such as Apple Remote Management Services, Web Services Dynamic Discovery, Ubiquiti Discovery Protocol and the Constrained Application Protocol.

Said Joffe, “During the shift to teleworking at scale, we would not be surprised to see the VPN protocol ports added to these targeted attacks.”

Two- and three-vector attacks ‘just right’ for attackers

In 2019, approximately 85% of all attacks used two or more threat vectors. That figure is comparable to the one for 2018; however, the share of attacks involving two or three vectors rose from 55% to 70%, with correspondingly fewer simple single-vector attacks and complex four- and five-vector attacks, suggesting that attackers have settled into the Goldilocks zone for attacks.

Security professionals continue to view DDoS attacks as a growing threat. According to the most recent Neustar International Security Council (NISC) survey, when asked which vectors they perceived to be increasing threats during November and December 2019, senior-level cybersecurity decision-makers cited social engineering via email most frequently (59%), followed by DDoS (58%) and ransomware (56%).

Web attacks increasing

2019 saw web attacks on the rise as well. Most companies recognise the danger that slow-loading websites pose to their business and attempt to protect them with web application firewalls. In the most recent NISC survey, 98% of respondents agreed that a WAF was an essential component of their security infrastructure. However, as more and more enterprises use multiple cloud providers, often involving a mix of public and private clouds, the need for consistent security across applications and platforms is growing.

“Web attacks can be difficult to track because some variation in the performance of websites is to be expected, but they are increasingly critical for businesses to address. One survey found 45% of consumers are less likely to make a purchase when they experience a slow loading website, and 37% are less likely to return to a retailer if they experience slow loading pages,” added Joffe.

A vendor-neutral cloud WAF, coupled with DDoS protection, can eliminate a large portion of threats, allowing enterprise application experts to focus their attention on the more specialised attacks. Continuous updates from a reliable threat feed can also deliver information on bad IPs and botnet command and control (C&C) sites before they are able to damage the network.
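As a rough illustration of how a threat feed of bad IPs might be applied, the sketch below checks a connection's source address against a list of blocked addresses and ranges. It is a minimal example under stated assumptions, not a description of any vendor's product: the `THREAT_FEED` entries and the `load_blocklist` and `is_blocked` helpers are hypothetical, and the addresses come from ranges reserved for documentation.

```python
import ipaddress

# Hypothetical feed entries (assumption): reserved documentation ranges,
# not real threat data.
THREAT_FEED = ["192.0.2.0/24", "198.51.100.17/32"]


def load_blocklist(entries):
    """Parse feed entries (single IPs or CIDR ranges) into network objects."""
    return [ipaddress.ip_network(entry, strict=False) for entry in entries]


def is_blocked(source_ip, blocklist):
    """Return True if source_ip falls inside any blocklisted network."""
    address = ipaddress.ip_address(source_ip)
    return any(address in network for network in blocklist)


blocklist = load_blocklist(THREAT_FEED)
print(is_blocked("192.0.2.44", blocklist))   # True: inside 192.0.2.0/24
print(is_blocked("203.0.113.9", blocklist))  # False: not in the feed
```

In practice the list would be refreshed continuously from the feed, and the check would sit in front of the application rather than inside it.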

Dell Technologies Global Data Protection Index 2020 Snapshot shines light on key challenges impacting data protection readiness according to 1,000 IT decision makers across 15 countries.

The Dell Technologies Global Data Protection Index 2020 Snapshot reveals that organisations on average are managing almost 40% more data than they were a year ago. With this surge in data comes inherent challenges. The vast majority (81%) of respondents reported their current data protection solutions will not meet all of their future business needs. The Snapshot, a follow-on to the biennial Global Data Protection Index, surveyed 1,000 IT decision makers across 15 countries at public and private organisations with 250+ employees about the impact these challenges and advanced technologies have on data protection readiness. The findings also show positive progress as an increasing number of organisations – 80% in 2019, up from 74% in 2018 – see their data as valuable and are currently extracting value or plan to in the future.

“Data is the lifeblood of business and the key to an organisation’s digital transformation,” said Beth Phalen, president, Dell Technologies Data Protection. “As we enter the next data decade, resilient, reliable and modern data protection strategies are essential in helping businesses make smarter, faster decisions and combat the effects of costly disruptions.”

Costly disruptions rise at alarming rates

According to the study, organisations are now managing 13.53 petabytes (PB) of data, nearly a 40% increase since the average 9.70PB in 2018, and an 831% increase since organisations were managing 1.45PB in 2016. The largest threat to all this data seems to be the growing number of disruptive events, from cyber-attacks to data loss to systems downtime. The majority of organisations (82% in 2019 compared to 76% in 2018) suffered a disruptive event in the last 12 months. And, an additional 68% fear their organisation will experience a disruptive event in the next 12 months.

Even more concerning is the finding that organisations using more than one data protection vendor are approximately twice as vulnerable to a cyber incident that prevents access to their data (39% of those using two or more vendors versus 20% of those using only one vendor). Yet the use of multiple data protection vendors is on the rise, with 80% of organisations choosing to deploy data protection solutions from two or more providers, up 20 percentage points since 2016.

The cost of disruption is also increasing at an alarming rate. The average cost of downtime surged by 54% from 2018 to 2019, resulting in an estimated total cost of $810,018 in 2019, up from $526,845 in 2018. The estimated cost of data loss also increased from $995,613 in 2018 to $1,013,075 in 2019. These costs are significantly higher for those organisations using more than one data protection vendor – nearly two times higher downtime-related costs and almost five times higher data loss costs, on average.
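The year-on-year changes quoted above can be reproduced from the dollar figures in the text. The short sketch below is only a worked check; the `percent_change` helper is a name introduced here for illustration. On the quoted figures, downtime costs rise by roughly 53.7%, which rounds to the 54% cited, while data loss costs rise by a little under 2%.

```python
def percent_change(old, new):
    """Relative change from old to new, expressed as a percentage."""
    return (new - old) / old * 100


# Figures quoted in the text (US dollars).
downtime_2018, downtime_2019 = 526_845, 810_018
data_loss_2018, data_loss_2019 = 995_613, 1_013_075

downtime_change = percent_change(downtime_2018, downtime_2019)
data_loss_change = percent_change(data_loss_2018, data_loss_2019)

print(f"Downtime cost change: {downtime_change:.1f}%")
print(f"Data loss cost change: {data_loss_change:.1f}%")
```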

Emerging technologies challenge data protection solutions

As emerging technologies continue to advance and shape the digital landscape, organisations are learning how to use these technologies for better business outcomes. The study reports that almost all organisations are making some level of investment in newer or emerging technologies, with the top five being: cloud-native applications (58%); artificial intelligence (AI) and machine learning (ML) (53%); software-as-a-service (SaaS) applications (51%); 5G and cloud edge infrastructure (49%); and Internet of Things/end point (36%).

Yet, nearly three-quarters (71%) of respondents believe these emerging technologies create more data protection complexity while 61% state that emerging technologies pose a risk to data protection. More than half of those using newer or emerging technologies are struggling to find adequate data protection solutions for these technologies, including:

The study also found that 81% of respondents believe their organisations’ existing data protection solutions will not be able to meet all future business challenges. Respondents shared a lack of confidence in the following areas:

Data protection joins forces with cloud

Businesses are taking a combination of cloud approaches when deploying new business applications and protecting workloads such as containers and cloud-native and SaaS applications. The findings show that organisations prefer public cloud/SaaS (43%), hybrid cloud (42%) and private cloud (39%) as deployment environments for newer applications such as these. Also, 85% of organisations surveyed say it is mandatory or extremely important for data protection providers to protect cloud-native applications.

As more data moves to, through and around edge environments, many respondents say cloud-based backups are preferred, with 62% citing private cloud and 49% citing public cloud as their approach for managing and protecting data created in edge locations.

“These findings prove that data protection needs to be central to a company’s business strategy,” said Phalen. “As the data landscape grows more complex, organisations need nimble, sustainable data protection strategies that can scale in a multi-platform, multi-cloud world.”

New data from Synergy Research Group shows that hyperscale operator capex in the fourth quarter was well over $32 billion, setting a new record for quarterly spending as it was marginally higher than Q4 of 2018 – the previous recordholder.

For the full year, total hyperscale operator capex was up just 1% from the prior year. However, capex specifically targeted at data centers increased substantially, growing by 11% in 2019, reflecting ongoing strength in the operators’ core business operations. The top five hyperscale spenders in 2019 were Amazon, Google, Microsoft, Facebook and Apple, whose capex budgets far exceed those of the other hyperscale operators. In 2019 capex growth at Amazon, Microsoft and Facebook was particularly strong, while Apple’s capex dropped off sharply, dragging down the overall figures. Outside of the top five, other leading hyperscale spenders include Alibaba, Tencent, IBM, JD.com, Baidu and Oracle.

Much of the hyperscale capex goes towards building, expanding and equipping huge data centers, which grew in number to 512 at the end of Q4. The hyperscale data is based on analysis of the capex and data center footprint of 20 of the world’s major cloud and internet service firms, including the largest operators in IaaS, PaaS, SaaS, search, social networking and e-commerce. In aggregate these twenty companies generated 2019 revenues of almost $1.4 trillion, up 13% from 2018.

“As expected there was a significant boost in hyperscale operator capex in the second half of 2019, which helped to counter a relatively soft start to the year. Most notable was that annual spending on data centers grew at a double-digit rate despite total capex being somewhat flat,” said John Dinsdale, a Chief Analyst at Synergy Research Group. “How will coronavirus impact this trend going forwards? While there are many unknowns, what is clear is that the hyperscale operators generate well over 80% of their revenues from cloud, digital services and online activities. The radical shifts we are seeing in social and business behavior will actually provide some substantive tailwinds for many of these businesses. These hyperscale firms are much better insulated against the current crisis than most others and we expect to see ongoing robust levels of capex.”

Just 12% of more than 1,500 respondents believe their businesses are highly prepared for the impact of coronavirus, while 26% believe that the virus will have little or no impact on their business, according to a recent survey by Gartner, Inc. In a Gartner business continuity webinar on March 6, Gartner experts asked participants how prepared they are for the impact of COVID-19.

“This lack of confidence shows that many organizations approach risk management in an outdated and ineffective manner,” said Matt Shinkman, vice president in the Gartner Risk and Audit practice. “The best-prepared organizations will manage the disruption caused by the coronavirus far better than their less-prepared peers.”

Most respondents (56%) rated themselves somewhat prepared, and 11% said they were either relatively or very unprepared. Just 2% of respondents believe their business can continue as normal, highlighting the huge range of businesses that could be affected by the outbreak. Twenty-four percent of respondents expect little disruption, while the majority expect business to continue at a reduced pace (57%), to be severely restricted (16%) or to be discontinued altogether (1%).

The challenge lies partly in the ambiguity inherent to managing an emerging risk such as coronavirus. Organizations often have policies in place to deal with most risks, but they don’t activate them until it’s too late because no one is owning the risk or taking it seriously until it is fully manifested. The threshold for a risk to generate executive action is often too high to enable an effective response.

“Board members tend to deal with emerging risks by just assuming they will go away and instead focus their attention on what is most important today,” said Mr. Shinkman. “In good times this methodology is reinforced because sometimes emerging risks really do just go away. It’s when they don’t that problems inevitably emerge.”

Having an enterprise risk management (ERM) function in place means that an organization is more likely to see risks coming and then mitigate the impact of those emerging risks more swiftly and effectively. Gartner’s view is that a focus on impacts rather than specific scenarios is best practice for ERM.

“It’s nearly impossible to predict exactly if or how a particular scenario will unfold or even when,” said Mr. Shinkman. “That’s what creates the ambiguity and often inaction around emerging risks. It’s much more effective to focus on potential impacts and how to mitigate them.”

Pandemic provides a perfect example of how this approach works – companies that wait until the emerging risk is already impacting operations and/or many employees will likely find themselves playing catch up and losing ground to companies that were better prepared.

Companies can get better prepared by considering what interim events could occur that would suggest that a pandemic, or similar emerging risk, is about to sharply increase in terms of its impact or likelihood. By using an ERM approach to identify and prepare for those specific events – and setting up mechanisms to monitor for them – the best companies are better positioned to avoid major disruption.

Those dealing with a crisis response to the coronavirus in their organization should have planned responses to specific impacts. For example, what will the company do if one employee gets sick? Ask all employees to self-isolate? Are work-from-home procedures sufficiently mature to support that or will work have to stop? Do suppliers or clients need to be notified? Is finance able to support operations in the event of anticipated losses?

Using an impacts-based method makes it very clear when to trigger a response plan and to start mitigating the effect of specific impacts on an organization. Also having response plans that react to specific impacts means it is simpler to communicate the plan to staff, so that all employees can play a part in managing risk. In fast-moving situations such as this, the more people who are owning risk, the more likely it is that an organizational response will be timely.

“Avoid constructing elaborate ‘what if?’ scenarios and focus on what is known,” said Mr. Shinkman. “Many organizations likely already have plans in place to deal with the types of disruption they are facing because of the coronavirus. The job of risk management is to ensure the right plans exist and make sure they get used at the appropriate moment.”

A five-phase strategic and systematic approach to strengthen the resilience of organizations’ current business models is key to continuity of operations during the coronavirus pandemic, according to Gartner, Inc.

“Companies tend to have traditional strategies and plans that focus on the continuity of the resources and processes but omit the business model,” said Daniel Sun, research vice president at Gartner. “However, the business model itself can be a threat to continuity of operations in external events, such as the global outbreak of COVID-19.”

CIOs can play a key role in the process of raising current business model resilience to ensure ongoing operations, since digital technologies and capabilities can influence every aspect of business models.

Phase 1 — Define the business model: Facing the contingency of COVID-19 outbreaks, companies should first focus on their core customers that are essential to their continuity of operations, and then refer to a process of defining their current business models by asking questions focused on their customers, value propositions, capabilities and financial models.

Although CIOs do not normally lead the process of defining business models, they should proactively engage with senior business leaders to run through 10 key questions regarding current business models. This is foundational for CIOs to actively participate in modifying current business models.

Phase 2 — Identify uncertainties: This step can be carried out through a strength, weakness, opportunity and threat (SWOT) analysis, or by brainstorming. Given the wide range of uncertainties and threats, this step can benefit from a heterogeneous group of participants with diverse backgrounds and interests, particularly where IT is normally involved. Companies should focus on the risks that the uncertainty poses to the components of the business model.

“CIOs should participate in, or coordinate, the brainstorming sessions to identify any uncertainties from COVID-19 outbreaks,” said Mr. Sun. “CIOs can share some of IT’s potential uncertainties and threats, such as issues with IT infrastructure, applications and software systems.”

Phase 3 — Assess the impact: Multidisciplinary members should form a project team to assess, or even quantify, the impact of the identified uncertainties. CIOs can provide the potential impacts from an IT perspective.

Phase 4 — Design changes: At this point in the process, the emphasis is to develop tentative strategies rather than estimate their feasibility. Selecting and executing changes will follow in the next phase. CIOs and IT should leverage digital technologies and capabilities to facilitate the designed changes.

Phase 5 — Execute changes: The decision on which changes to execute is principally a decision for senior leadership teams. The strategies for changes defined in Phase 4 provide essential input for this decision process. Senior leadership teams should select the strategies they feel most compelling to implement, which is often based on both economic calculations and intuition.

“Once senior leadership teams select the business and IT change initiatives, CIOs should apply an agile approach in executing the initiatives. For example, they can form an agile (product) team of multidisciplinary team members, enabling the alignment between business and IT and ensuring delivery speed and quality,” said Mr. Sun. “In crises such as the COVID-19 outbreak, agility, speed and quality are crucial for enabling the continuity of operations.”

Coronavirus exposes outdated risk management practices

Organizations’ current approach to risk governance is not sufficient to tackle the complex risk environment they face today, according to Gartner, Inc. The COVID-19 pandemic is just the latest in a line of recent risk events showing that organizations are not properly set up to manage risks, especially fast-moving ones.

Gartner research showed that 87% of audit departments say their organization uses a “three lines of defense” (3LOD) model for risk governance. This model states that line management should act as the first line of defense, identifying risks and implementing controls. Risk and assurance functions such as legal, compliance and enterprise risk management (ERM) should act as a second line, overseeing and monitoring risk management processes. Finally, internal audit should act as a third line, taking a bird’s-eye view of the effectiveness of controls and risk management.

“The response to the coronavirus pandemic is a perfect example of when the 3LOD and traditional risk governance don’t work very well,” said Malcolm Murray, vice president and fellow, research for the Gartner Audit and Risk practice. “Traditional approaches fail because they can’t effectively deal with fast-moving and interconnected risks. Pandemic is a rapidly developing type of risk that needs a dynamic risk governance (DRG) set-up.”

“The coronavirus pandemic demonstrates why organizations need a new approach for governing the management of the many complex risks they face in today’s world,” said Mr. Murray. “Adopting the DRG principles helps organizations ensure they have the appropriate governance for different kinds of risks, with the right kind of risk management activities and the right people involved.”

Dynamic Risk Governance

The effectiveness of DRG was measured in a Gartner survey of over 200 organizations, looking at whether traditional or dynamic approaches to governing risk management led to better risk management behaviors and better risk outcomes. The three pillars of DRG each increased the occurrence of high-quality risk management behaviors:

· Risk-tailored governance (18% increase)

The governance model should depend on the risk’s speed, the organization’s risk tolerance and internal constraints rather than relying on a one-size-fits-all level of scrutiny, such as centralized oversight for all risks or models based on industry norms. Corporate leaders should have the final say here, because the governance model should be determined based on the company strategy. A benefit of placing this authority with senior management rather than with the board and the assurance functions is a more rapid response. These top executives can take faster action.

· Activity-based risk governance (22% increase)

This means dispensing with the idea that only the first line owns all risk activities, and assigning accountability for risk management tasks without regard for the borders between first, second and third line. Senior management – not assurance functions – should determine who will decide the task owners for a particular risk. For some risks, it will not matter which exact function is accountable for each activity – as long as there is specific accountability assigned.

· Digital-first risk governance (18% increase)

This means considering digital solutions during creation of the governance framework for the risk, not as an afterthought. For instance, if large parts of the risk management can be automated, then fewer functions need to be involved.

When looking at the risks related to the coronavirus pandemic specifically, adopting the DRG principles is beneficial at all three stages of dealing with the risk – response, recovery and restoration. For the first stage, adopting DRG means quickly identifying who in senior management should own the governance of the risk and quickly setting up an initial governance model that considers the fast speed of the risk. It means identifying the key risk management activities for this stage of the risk and assigning clear accountability for these to appropriate parties.

In subsequent stages, when attention shifts towards recovery and restoration, applying the DRG principles allows organizations to regularly revisit whether the risk is governed in the right way. Once there is more visibility to the path of the risk, additional risk management activities can be added, such as adding a focus on monitoring the risk and assessing longer-term impact.

“This isn’t just about risk managers, this is about the board of directors and senior management making risk governance a key consideration so that organizations become more resilient against fast-emerging risks, such as coronavirus,” said Mr. Murray. “The DRG methodology applies equally to the many fast-emerging risks presented by digitalization.”

As COVID-19 coronavirus spreads globally, Gartner, Inc. has identified three impact areas for customer service and support leaders to focus on to manage risk and ensure continuity of operations.

“Though service leaders are familiar with business continuity and disaster recovery planning, pandemic planning is very different because of its wider scope and the uncertainty of impact,” said John Quaglietta, senior director analyst in Gartner’s Customer Service and Support Practice. “The global and dynamic impact of COVID-19 requires planning for longer recovery times and many scenarios because pandemic events are so fluid, and things can change quickly without notice.”

The three impact areas that Gartner recommends that service leaders focus on include:

Impact Area 1: Operational Continuity

Continuity of operations in service and support organizations is largely delivered by agents, operations staff and management. However, this is being threatened by increased absence due to quarantines.

“Since service and support are labor-intensive, having large numbers of staff miss work due to pandemic-related issues can severely impact delivery,” said Deborah Alvord, senior director analyst in Gartner’s Customer Service and Support Practice. “Delivery impairment has both short- and long-range effects on organizations’ ability to deliver service to customers and meet related service goals according to customer expectations.”

Service leaders should maintain continuity of business operations by completing a workforce planning assessment and determining outsourcing and work-from-home options. Additionally, they should implement and promote digital and self-service channels.

Impact Area 2: Staff Morale

Unpredictable work conditions create additional pressure and demand on employees, fueling anxiety, morale and retention issues. Gartner recommends service leaders establish programs that promote employee well-being, focus on employee engagement, and include employees in business continuity and disaster recovery planning.

Impact Area 3: Customer Demand

Communicating with customers during the life cycle of the pandemic is critical. It is important for service leaders to consistently provide updates on developing events and how those events affect the organization’s ability to provide service and support. If customers should expect delays, let them know in advance to reduce unneeded contact volume. Where possible, service leaders should use a multichannel strategy to communicate updates, process or policy changes, and changes in service caused by COVID-19. This can be done via inbound and outbound channels such as SMS, Interactive Voice Response (IVR) and phone.

Worldwide server revenue update

The worldwide server market continued to grow in the fourth quarter of 2019 as revenue increased 5.1% and shipments grew 11.7% year over year, according to Gartner, Inc. In all of 2019, worldwide server shipments declined 3.1% and server revenue declined 2.5% compared with full-year 2018.

"The market returned to growth with a very strong fourth quarter result, largely driven by a return of demand from hyperscalers,” said Adrian O’Connell, senior research director at Gartner. “However, the outlook for the worldwide server market in 2020 is subject to great uncertainty. The impact of the coronavirus (COVID-19) outbreak is expected to temper forecast growth. Although demand from the hyperscale segment is expected to continue through the first half of the year, other buying organizations’ reactions will vary.”

Dell EMC secured the top spot in the worldwide server market based on revenue in the fourth quarter of 2019 (see Table 1). Despite a decline of 9.9% year over year, Dell EMC secured 17.3% market share, followed by Hewlett Packard Enterprise (HPE) with 15.4% of the market. IBM experienced the strongest growth in the quarter, growing 28.6%.

Table 1

Worldwide: Server Vendor Revenue Estimates, 4Q19 (U.S. Dollars)

| Company | 4Q19 Revenue | 4Q19 Market Share (%) | 4Q18 Revenue | 4Q18 Market Share (%) | 4Q19-4Q18 Growth (%) |
| --- | --- | --- | --- | --- | --- |
| Dell EMC | 3,986,574,446 | 17.3 | 4,426,376,226 | 20.2 | -9.9 |
| HPE | 3,551,891,310 | 15.4 | 3,887,881,501 | 17.8 | -8.6 |
| IBM | 2,294,258,503 | 10.0 | 1,783,691,221 | 8.1 | 28.6 |
| Inspur Electronics | 1,831,676,801 | 8.0 | 1,801,622,141 | 8.2 | 1.7 |
| Huawei | 1,488,740,004 | 6.5 | 1,815,071,726 | 8.3 | -18.0 |
| Others | 9,860,302,857 | 42.8 | 8,186,405,788 | 37.4 | 20.4 |
| Total | 23,013,443,922 | 100.0 | 21,901,048,604 | 100.0 | 5.1 |

Source: Gartner (March 2020)
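For readers who want to see how the share and growth columns in Table 1 relate to the revenue columns, the sketch below recomputes both from the published dollar figures. It is an illustrative check only, not Gartner's methodology; the `summarize` helper and its variable names are introduced here as assumptions. Market share is a vendor's 4Q19 revenue divided by the 4Q19 total, and growth is the change from 4Q18 to 4Q19 divided by the 4Q18 figure.

```python
# 4Q19 and 4Q18 revenue in US dollars, copied from Table 1.
revenue = {
    "Dell EMC": (3_986_574_446, 4_426_376_226),
    "HPE": (3_551_891_310, 3_887_881_501),
    "IBM": (2_294_258_503, 1_783_691_221),
    "Inspur Electronics": (1_831_676_801, 1_801_622_141),
    "Huawei": (1_488_740_004, 1_815_071_726),
    "Others": (9_860_302_857, 8_186_405_788),
}
total_4q19 = 23_013_443_922  # Total row of Table 1


def summarize(table, total_now):
    """Return {vendor: (share %, growth %)} rounded to one decimal place."""
    summary = {}
    for vendor, (now, before) in table.items():
        share = now / total_now * 100
        growth = (now - before) / before * 100
        summary[vendor] = (round(share, 1), round(growth, 1))
    return summary


for vendor, (share, growth) in summarize(revenue, total_4q19).items():
    print(f"{vendor:<20}{share:>6.1f}{growth:>8.1f}")
```

The recomputed values match the published columns to one decimal place. The same two formulas apply to the shipment figures.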

In server shipments, Dell EMC maintained the No. 1 position in the fourth quarter of 2019 with 14.2% market share (see Table 2). HPE secured the second spot with 10.8% of the market. Both Dell EMC and HPE experienced declines in server shipments, while Lenovo experienced the strongest growth with a 22.4% increase in shipments in the fourth quarter of 2019.

Table 2

Worldwide: Server Vendor Shipment Estimates, 4Q19 (Units)

| Company | 4Q19 Shipments | 4Q19 Market Share (%) | 4Q18 Shipments | 4Q18 Market Share (%) | 4Q19-4Q18 Growth (%) |
| --- | --- | --- | --- | --- | --- |
| Dell EMC | 549,552 | 14.2 | 580,580 | 16.7 | -5.3 |
| HPE | 417,699 | 10.8 | 424,422 | 12.2 | -1.6 |
| Inspur Electronics | 296,934 | 7.7 | 293,702 | 8.5 | 1.1 |
| Huawei | 267,157 | 6.9 | 260,193 | 7.5 | 2.7 |
| Lenovo | 233,893 | 6.0 | 191,032 | 5.5 | 22.4 |
| Others | 2,113,167 | 54.5 | 1,723,032 | 49.6 | 22.6 |
| Total | 3,878,402 | 100.0 | 3,472,961 | 100.0 | 11.7 |

Source: Gartner (March 2020)

Full-Year 2019 Server Market Results

In 2019, both worldwide server shipments and revenue declined, with shipments falling 3.1% and revenue down 2.5%.

As for vendor performance, Dell EMC took the top spot in both revenue and shipments with 20.5% market share and 16.3% market share, respectively. HPE secured the No. 2 position with market share of 17.3% in revenue and 12.3% in shipments. Inspur Electronics is the only vendor in the top five that grew in both revenue and shipments in 2019.

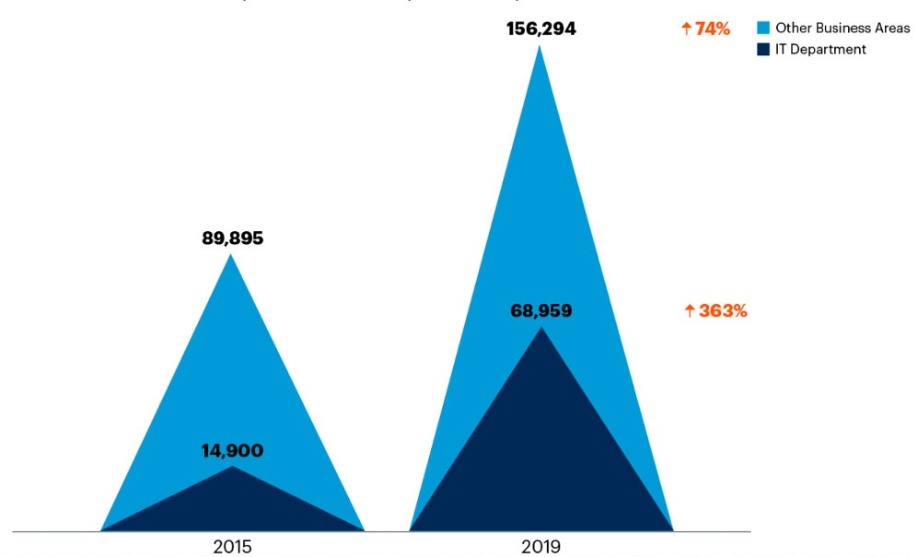

Strongest demand for AI talent comes from non-IT departments

For the past four years, the strongest demand for talent with artificial intelligence (AI) skills has not come from the IT department, but rather, from other business units in the organization, according to Gartner, Inc.

Gartner Talent Neuron data shows that although the IT department’s need for AI talent has tripled between 2015 and 2019, the number of AI jobs posted by IT is still less than half of that stemming from other business units (see Figure 1).

Figure 1: Total AI Jobs Posted in Top 12 Countries by GDP, July 2015 Through March 2019

Note: The top countries are derived from the IMF 2019 ranking of countries by total GDP, excluding Italy, Spain and South Korea due to limited time series data.

Source: Gartner Talent Neuron (March 2020)

“High demand and tight labor markets have made candidates with AI skills highly competitive, but hiring techniques and strategies have not kept up,” said Peter Krensky, research director at Gartner. “In the recent Gartner AI and Machine Learning Development Strategies Study, respondents ranked ‘skills of staff’ as the No. 1 challenge or barrier to the adoption of AI and machine learning (ML).”

Departments recruiting AI talent in high volumes include marketing, sales, customer service, finance, and research and development. These business units are using AI talent for customer churn modeling, customer profitability analysis, customer segmentation, cross-sell and upsell recommendations, demand planning, and risk management.

A significant portion of AI use cases are reported from asset-centric industries supporting projects such as predictive maintenance, workflow and production optimization, quality control and supply chain optimization. AI talent is often hired directly into these departments with clear use cases in mind so that data scientists and others can learn the intricacies of the specific business area and remain close to the deployment and consumption of their work.

“Given the complexity, novelty, multidisciplinary nature and potentially profound impact of AI, CIOs are well-placed to help HR in the hiring of AI talent in all business units,” said Mr. Krensky. “Together, CIOs and HR leaders should rethink what skills are truly necessary for an AI-focused employee to have on Day 1 and explore candidate criteria adjacent to hiring specifications. CIOs should also think creatively about IT’s role in governing and supporting diverse AI initiatives and the evolving teams driving this activity.”

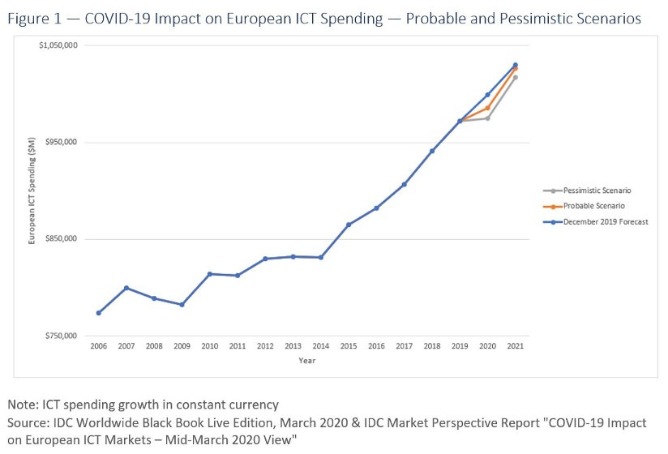

European total ICT spending growth for 2020 revised down from 2.8% to 1.4% in the most probable IDC European research scenario.

The coronavirus outbreak across European countries and the necessary containment measures put in place by governments will substantially affect European ICT markets, accelerating the impact already felt from the shocks in Asia. In this extremely fluid scenario, International Data Corporation (IDC) expects to see a significant slowdown in technology spending in 2020 across European organizations, with regional ICT spending growth rates for 2020 expected to halve from 2.8% to 1.4% compared to the December 2019 forecast, as the crisis seeps into virtually all European economies.

"European technology vendors and buyers are rapidly adapting to the disruption and the extremely fast-moving market conditions," said Thomas Meyer, general manager and GVP research at IDC Europe. "In such a fluid scenario, it is still too early to fully assess the overall European ICT impact picture. IDC recommends that all technology leaders recalibrate their strategies. In use cases such as patient care as well as customer, citizen, student or employee experience and proximity, we expect to see accelerated adoption of digital solutions."

"To help technology providers and buyers with their short-term business and technology investment planning, we have developed two scenarios for Europe: a probable one in which the extent of the coronavirus is broadly contained in the next few weeks, and a pessimistic one that considers a less controlled 'domino' effect on a global scale," said Philip Carter, chief analyst at IDC Europe.

In the most probable IDC scenario, European ICT spending is projected to grow by 1.4% in constant currency terms this year, down from the 2.8% forecast published at the end of 2019 in the IDC Worldwide Black Book Live Edition.

"When taking a broad historical view of European ICT spending across the past decade, the impact of the COVID-19 crisis has not reached the levels of the 2007–2008 financial crisis yet. However, it does represent the first strong deceleration in spending growth since the European debt crisis in 2013-2014," said Giorgio Nebuloni, AVP at IDC Europe.

The new outlook is shaped primarily by lower expectations in the hardware and services markets.

Impacts on the software and telecoms markets are less evident, and some positive factors are expected to offset the natural downturn to a large extent. The decrease in hardware spending will negatively affect the overall software market to a degree, and difficulties prompted by COVID-19 across industries will affect total telco connections. At the same time, the increasing need for remote collaboration will push demand for telco services and drive new opportunities in collaborative applications and platforms, as well as greater use of the security technologies that enable them.

New Use Cases for Technology Will Emerge Quickly

"Factors weighing on investment will range from a decrease in customer demand to supply chains breaking up," said Carla La Croce, Senior Research Analyst at IDC Europe. "Nevertheless, there are areas in which spending will grow. There are specific solutions and use cases, such as videoconferencing, intelligent supply, chatbots, and elearning platforms among others, highlighting how technology can help businesses and societies face (and hopefully surmount) these new challenges."

One of the most pertinent examples is the ability to contain the COVID-19 outbreak itself with the use of artificial intelligence (AI). The report by the WHO-China Joint Mission on COVID-19 highlights how big data and artificial intelligence technologies were applied "to strengthen contact tracing and the management of priority populations." Researchers have also started to use deep learning techniques to support COVID-19 detection when analyzing CT scans and patient records. IDC believes some of these use cases could be observed in Europe over the next few weeks, albeit at a smaller scale.

A Pessimistic Scenario Depicting no Growth

In the most pessimistic scenario, IDC expects European ICT spending to drop to a near-flat 0.2% growth in 2020, with all technology domains but software showing negative trends for the remaining part of the year. A series of domino effects, including oil price changes, currency depreciation, the inability of governments to make timely payments, delays in the supply chains and lay-offs in both public and private sectors would lead to a much more dramatic impact on the overall ICT European market and an exponential increase in the downside risk in IDC Market Forecast assumptions.

As restrictions of movement bite, supply-chain disruption becomes commonplace and demand drops, European IT spend in manufacturing, personal and consumer services, transportation, and hospitality will be strongly curbed, as these industries are the most exposed to the COVID-19 crisis impact in the short-, mid-, and long-term view. At the same time, other industries, such as healthcare and government, will be forced to accelerate investments in such a contingent situation. IDC expects this will drive additional IT investments for the public sector, pushing hard on infrastructure and collaboration tools deployments, but not before the second half of 2020.

The pre-existing digital maturity of industries will also affect their capacity to invest in technologies, regardless of their effective budget capabilities. Limited face-to-face business relationships between vendors and end users will inevitably reduce investment in significant digital transformation projects in less mature industries. This is particularly true for projects involving more advanced technologies. This reduced social contact (the duration of which is hard to predict) will also have significant consequences for the purchasing options of a good portion of consumers. Those consumers, especially in less digital-savvy countries, will be gradually excluded from access to technological innovations.

Server and storage markets to decline

End user spending on IT infrastructure (server and enterprise storage systems) will decline in 2020 as a result of the widespread coronavirus pandemic. According to the International Data Corporation (IDC) Worldwide Quarterly Server Tracker and Worldwide Quarterly Enterprise Storage Systems Tracker, under the current probable scenario server market revenues will decline 3.4% year over year to $88.6 billion and external enterprise storage systems (ESS) revenues will decline 5.5% to $28.7 billion in 2020. The server market is expected to decline 11.0% in Q1 and 8.9% in Q2 and then return to growth in the second half of the year. The external ESS market is forecast to decline 7.3% in Q1 and 12.4% in Q2 before returning to slight growth by the end of 2020 with further recovery expected in 2021.
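As a quick sanity check on the figures above, the 2019 base implied by each 2020 forecast can be backed out from the stated year-over-year decline. This is only an illustrative sketch: the function name `implied_prior_value` is hypothetical, and the inputs are the rounded figures quoted in the text, so small rounding differences against IDC's own tables are to be expected.

```python
def implied_prior_value(current_value: float, growth_pct: float) -> float:
    """Back out the prior-year value from this year's value and its
    year-over-year growth rate (negative for a decline)."""
    return current_value / (1 + growth_pct / 100)


# Rounded figures quoted in the text (values in $ billions).
servers_2019 = implied_prior_value(88.6, -3.4)
storage_2019 = implied_prior_value(28.7, -5.5)

print(f"Implied 2019 server revenue: ${servers_2019:.1f}B")
print(f"Implied 2019 external ESS revenue: ${storage_2019:.1f}B")
```

The implied bases can then be compared with the 2019 values IDC reports for the two markets.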

IDC developed three forecast scenarios (optimistic, probable, and pessimistic) for the impact of COVID-19 on the IT infrastructure markets. The probable scenario assumes a broad negative impact starting in China and spreading into other regions before slowing toward end of the year. Elements of the impact include changing demand expectations from various groups of IT buyers, supply chain shortages and logistical delays, short-term component price increases, and a suppressed economic and social climate. The current forecast is based on the probable scenario as of March 26, 2020. However, as the situation continues to unfold, the forecasts might be adjusted further.

The fast-changing environment has revealed some remarkable differences in how the pandemic has affected various segments of the market. As the first to be hit by the coronavirus, China will see the greatest negative impact in the first quarter of 2020 while other regions will start to experience the impact in the second quarter. Similarly, some industries (transportation, hospitality, retail, etc.) are facing significantly reduced consumer activity and business closures, while others are being hit by an unexpected wave of demand for services, including video streaming, Web conferencing, and online retail. Facing economic uncertainty, many businesses are being forced to consider more expedited adoption of cloud services to fulfill their compute and storage needs. This spike in demand has put unplanned pressure on the IT infrastructure in cloud service provider datacenters, leading to growing demand for servers and system components. As a result, the IT infrastructure market has two submarkets going in different directions: decreasing demand from enterprise buyers and increasing demand from cloud service providers. This dynamic is affecting the server market the most, resulting in just a moderate decline for the overall market in 2020. The external storage systems market, with a higher share of enterprise buyers, will experience a deeper decline in 2020.

Worldwide End User Spend on Servers, 2019, 2020 and 2024 and Five-Year CAGR (value in $ billions)

| IT Infrastructure Market | Market Segment | 2019 Value | 2020 Value | 2020 Growth | 2024 Value | 2019-2024 CAGR |
| --- | --- | --- | --- | --- | --- | --- |
| Servers | x86 | $83.8 | $81.9 | -2.2% | $109.8 | 5.6% |
| | non-x86 | $8.0 | $6.7 | -16.0% | $6.8 | -3.3% |
| | Total Server | $91.7 | $88.6 | -3.4% | $116.6 | 4.9% |

Source: IDC Worldwide Quarterly Server Tracker, March 26, 2020

Worldwide End User Spend on External Enterprise Storage Systems, 2019, 2020 and 2024 and Five-Year CAGR (value in $ billions)

| IT Infrastructure Market | Market Segment | 2019 Value | 2020 Value | 2020 Growth | 2024 Value | 2019-2024 CAGR |
| --- | --- | --- | --- | --- | --- | --- |
| External ESS | External RAID | $30.0 | $28.3 | -5.7% | $32.0 | 1.3% |
| | Storage Expansion* | $0.4 | $0.5 | 9.6% | $0.4 | -2.4% |
| | Total External ESS | $30.4 | $28.7 | -5.5% | $32.4 | 1.3% |

Source: IDC Worldwide Quarterly Enterprise Storage Systems Tracker, March 26, 2020

* Note: Storage Expansion category includes OEM and ODM Storage Expansions.

"The impact of COVID-19 will certainly dampen overall spending on IT infrastructure as companies temporarily shut down and employees are laid off or furloughed," said Kuba Stolarski, research director, IT Infrastructure at IDC. "While IDC believes that the short-term impact will be significant, unless the crisis spirals further out of control, it is likely that this will not impact the markets past 2021, at which point we will see a robust recovery with cloud platforms very much leading the way."

In the longer term both markets will return to growth. The server market is expected to deliver a compound annual growth rate (CAGR) of 4.9% over the 2019-2024 forecast period with revenues reaching $116.6 billion in 2024. Meanwhile the external ESS market will see a five-year CAGR of 1.3% growing to $32.4 billion in 2024.
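For readers who want to check the arithmetic, the compound annual growth rate can be recomputed from the 2019 and 2024 totals that IDC reports. The sketch below uses the standard CAGR formula, (end / start)^(1 / years) - 1; the function name `cagr` is hypothetical, and because the inputs are rounded published values, results for smaller segments may differ from IDC's figures by a tenth of a point.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values, as a fraction."""
    return (end_value / start_value) ** (1 / years) - 1


# Totals reported by IDC for 2019 and 2024 (values in $ billions).
server_cagr = cagr(91.7, 116.6, 5)
storage_cagr = cagr(30.4, 32.4, 5)

print(f"Server market 2019-2024 CAGR: {server_cagr:.1%}")
print(f"External ESS market 2019-2024 CAGR: {storage_cagr:.1%}")
```

Both results agree with the 4.9% and 1.3% rates quoted for the two markets once rounded to one decimal place.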

"The IT infrastructure markets are already going through a transformation and shifts in end user spending will bring an even faster changing IT buyer landscape," said Natalya Yezhkova, research vice president, IT Infrastructure. "While the current crisis brings tensions and uncertainty to the market, it also will push organizations to expedite adoption of technologies and IT delivery models that help with optimization of IT infrastructure resources."

Enterprises move to augmented and virtual reality

According to the latest IDC Worldwide Augmented and Virtual Reality Spending Guide, Asia/Pacific spending on augmented reality and virtual reality (AR/VR) is forecast to be $3.7 billion in 2019, an increase of 34.0% from the previous year. Asia/Pacific spending on AR/VR products and services will continue this strong growth throughout the forecast period (2018-23), with a five-year compound annual growth rate of 62.0%. This growth is primarily driven by commercial industries, which are expected to be more than $11 billion larger than the consumer segment by the end of the forecast period. Despite this, the consumer segment (which stands at $1.7 billion in 2019) continues to be larger than any other single industry segment over the forecast period.

The high growth in the commercial segment is primarily due to the ability of AR/VR to solve complex business problems and streamline operations. The two industries seeing the most implementation activity in Asia/Pacific, and spending the most on this technology, are education ($495.3 million in 2019) and retail ($244.4 million in 2019).

“Specialized training programs in the education system that include VR pilot training through simulations, learning of human anatomy, etc. have given an opportunity to develop a specific skill set in the virtual environment. Leveraging this technology, errors made during the training process will not have fatal consequences. This has turned out to be a huge transition for institutes to save time for distance learning purposes and help in reducing cost due to the travel expenses incurred on students. Similarly, high-end retailers came across improvised customer engagement programs using this technology. This has also helped them in delivering products customized to a specific customer’s choice with the same or less time and effort. The technology has seen an increase in consideration and solutions around online retail showcasing, retail showcasing, and virtual test drive,” says Ritika Srivastava, Associate Market Analyst at IDC India.

Although these two industries have the highest market spend, other industries have the potential to grow at a faster pace over the forecast period (2018-23), with some new use cases in the pipeline. Retail (94.8% CAGR), followed by utilities, securities, investment services, and process manufacturing, are the industries gaining momentum in exploring new use cases, and they are attractive in terms of investment. Augmented reality use cases that support operational tasks such as assembly, maintenance, and repair have considerable impetus within these industries.